The Predictability Factor is a weekly deep dive at the intersection of AI, Security, Privacy and Tech, to help you Go From Chaos to Resilience in The World of AI.

This is part 1 of a 2-part series on the AI Governance and Security Maturity Roadmap. Part 2 is coming out soon.

While I was still in Norway, in one of my CISO roles for critical infrastructure, I got the opportunity to work on the National AI strategy, specifically on the topics of AI Security, AI Ethics and Privacy for building Trustworthy AI.

In another CISO role, my team and I implemented our enterprise AI Strategy and Governance, together with our AI and Emerging Tech policy before the first public version of ChatGPT came out.

I’ve been dedicated to ML/AI work for the last 6 years or so. While more and more organisations are waking up to the importance of an AI strategy, governance pillars, controls and engineering, I still see many of them struggle to build and implement these effectively within their enterprise.

Every time I talk to CISOs, CIOs, risk leaders, GRC, internal auditors or boards, this is the one question that I get often:

How can I trust that an AI agent will only do what it is supposed to do?

You can’t. At least not entirely. But that’s only half the story.

Just because most security practitioners will say “zero-trust”, the answer is not that simple. That first question is almost always followed by this statement:

I want to have AI agents in my infrastructure that I can trust. I want trusted AI.

That’s the reality of business.

I get it: there is no hundred percent trust. There never will be. At the same time, trust and zero trust aren’t opposites, and the comparison falls apart without context. That context is key.

The implicit goal for every business is trust. In the world of agentic AI, building trust means three key things:

- Assume chaos

- Verify across the agentic AI lifecycle

- Build elements of resilience within chaos

AI agents will go rogue, hallucinate, make completely inaccurate decisions and take completely wrong actions leading to severe unintended consequences.

The key premise of 'Chaos to Resilience' is not to bring chaos to zero. It’s to build resilience, predictability and trust around it.

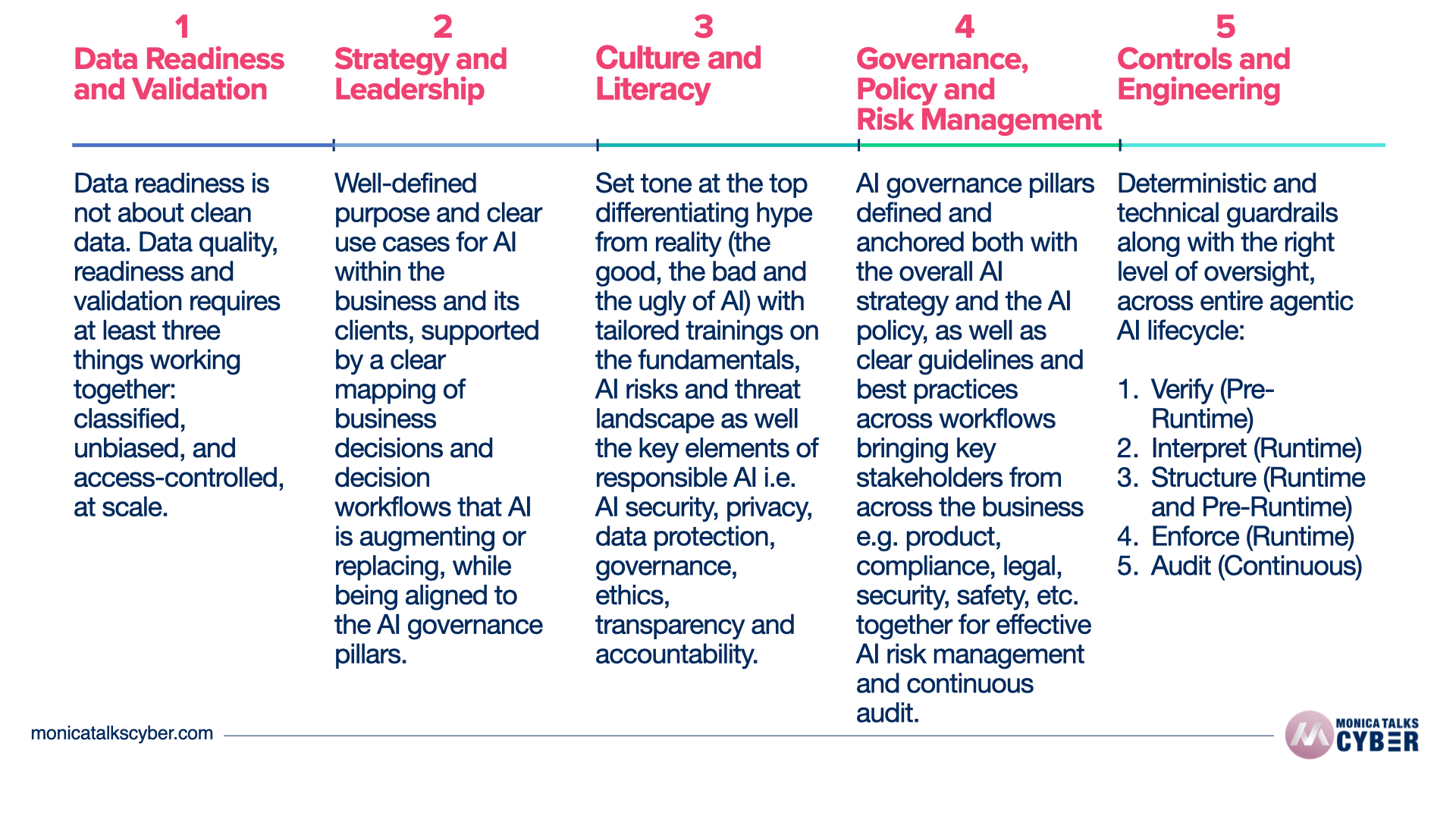

In this 2-part series, a special edition of The Predictability Factor, we will go through the 5 steps and dimensions of the AI governance and security maturity roadmap for agentic AI in your enterprise. These are key for building trust, predictability and resilience in your agentic AI enterprise.

Here’s a quick snapshot of my AI governance and security maturity roadmap, along with the keynote I gave recently in Finland where I touched upon my entire maturity roadmap. However, the devil is in the details (step-by-step roadmap below)…

In part 1 (this edition of The Predictability Factor), we will cover:

Step 1: Data Readiness and Validation, and Step 2: Strategy and Leadership.

In part 2 (coming out soon), we will cover:

Step 3: Culture and Literacy, Step 4: Governance, Policy and Risk Management, Step 5: Controls and Engineering, and more (along with a bonus).


AI Stopped Just Saying Things

Over the last 3 years, there has been a major shift in AI. Since ChatGPT’s first public model came out, we have gone from “AI that says things” to “AI that does things”.

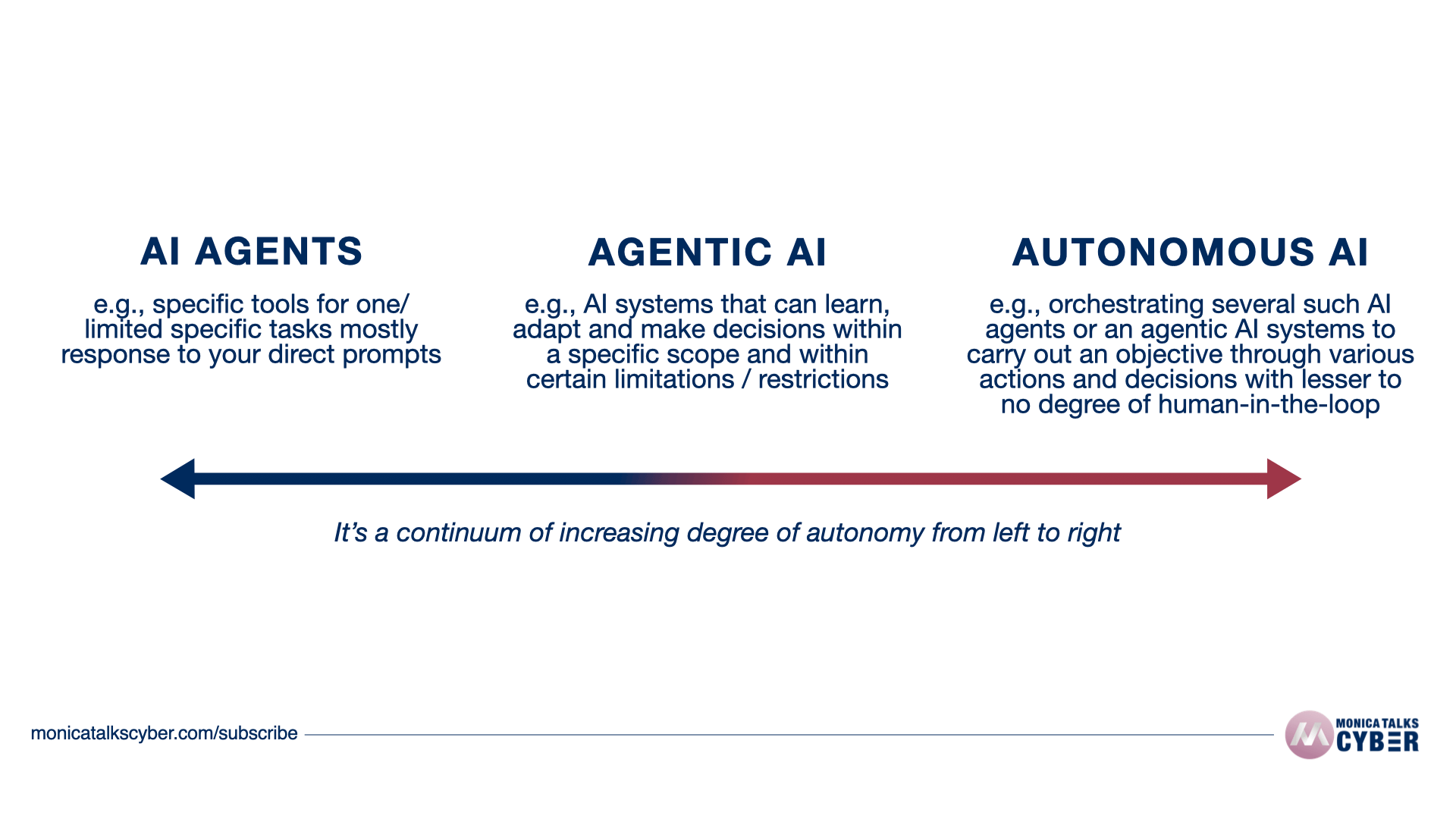

AI has gone from ‘generating’ to ‘reasoning’ to ‘doing things’. You no longer just tell AI what to say. You tell it to build, create, send, buy, decide and act. That shift from generative to agentic AI is not just a semantic upgrade. It is a redefinition of what risk looks like inside your organisation.

OpenClaw is the clearest window into that shift.

The reason I say that is because while you may not have it on your roadmap, some employee in your organisation has already installed AI agents and is running agentic AI in your enterprise within your infrastructure, as Shadow AI.

The shift between AI agents, Agentic AI and Autonomous AI isn’t binary. It’s a sliding continuum with an increasing degree of autonomy and scope, but also with less and less human oversight or restriction, as you go from left to right.

Image 2: Execution vs. Autonomy across AI Agents and Systems

Why does this matter?

In under three months, OpenClaw accumulated over 200,000 GitHub stars, making it one of the fastest-growing open-source projects on record. Tens of thousands of people are running it locally on machines connected to your enterprise, with persistent memory across sessions, with access to your company data, integrated with your apps and tools, and operating with real system access. One developer in Austria. Four months. A tool now running inside thousands of enterprises whose security teams have no idea it is there. And this isn't just about OpenClaw.

AI agents are everywhere. Or rather, it would be more accurate to say:

Ungoverned and insecure shadow AI is everywhere, and it is actively being integrated into your business and decision workflows.

PromptArmor researchers documented a specific attack pathway: send a malicious link to an OpenClaw agent through Telegram or Discord. When the agent responds and includes an attacker-controlled URL, the messaging app's link preview automatically renders it, transmitting the user's confidential data to the attacker's domain. No click required. The preview fires. The data leaves.
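To make the pathway concrete, here is a minimal Python sketch of one possible mitigation: filtering outbound agent messages so that only allowlisted links ever reach a channel that renders previews. This is an illustration under stated assumptions, not something PromptArmor or OpenClaw prescribe. `ALLOWED_HOSTS`, `is_allowed` and `strip_untrusted_urls` are hypothetical names, and the hosts are placeholders.

```python
import re
from urllib.parse import urlsplit

# Hypothetical allowlist: hosts the agent may link to in outbound messages.
ALLOWED_HOSTS = {"docs.example.com", "intranet.example.com"}

# Deliberately simple URL matcher for the sketch; real traffic needs more care.
URL_PATTERN = re.compile(r"https?://[^\s<>\"')]+")


def is_allowed(url):
    """True only if the URL's host is on the allowlist."""
    host = urlsplit(url).hostname
    return host is not None and host in ALLOWED_HOSTS


def strip_untrusted_urls(message):
    """Replace every non-allowlisted URL before the message leaves the agent."""
    def _replace(match):
        url = match.group(0)
        return url if is_allowed(url) else "[link removed]"
    return URL_PATTERN.sub(_replace, message)


# Made-up agent output: the attacker's URL carries data in the query string.
reply = ("Summary ready: https://attacker.example.net/c?d=API_KEY_123 "
         "and https://docs.example.com/guide")
print(strip_untrusted_urls(reply))
```

The point of the design is that the check sits outside the model. The agent can be tricked into writing the URL, but a deterministic filter decides whether the preview ever gets a chance to fire.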

Agentic AI that does things for you is a massive opportunity and an even bigger risk, especially if you have no idea what you are doing. The supply chain exposure runs even deeper.

341 malicious skills were discovered in ClawHub, the official OpenClaw skill registry, primarily delivering malware designed to steal credentials and data from macOS systems. Gartner labeled OpenClaw "insecure by default." China banned it from government computers.

Just over the last 30 days, we have seen 3 major supply chain attacks: LiteLLM, Axios, and Vercel. On top of that, the non-public version of Mythos was accessed by unauthorised users, who breached its vendor and guessed the URL.

That’s the state of “Hacking AI” in 2026. It’s not attacking the underlying models. It’s attacking everything around them. Or in this case, just “guessing” the URL of Mythos.

No sophisticated malware. No zero-day. No insider access.

So how do you build “trusted” agentic AI within your enterprise? Let me share an analogy which is key to building the right foundation.

German Autobahn vs. Probabilistic Models

I am an Indian-German, living in London. Over the last 10 years or so, I’ve shared this personal story that somehow ties neatly to every evolution of technology we have seen thus far. First it was the Internet, then the web, cloud, OT, IoT, IIoT, and now it's agentic AI.

I’ve always loved driving. When I drove in Germany, I fell in love with it even more. My friends joke about this. If you know how to drive in India and if you know how to drive in Germany, you likely can drive anywhere, in any condition, well.

German highways are the only highways in the world where you have unlimited speed zones.

Simple probabilistic models suggest faster speed always yields more fatalities. Studies have been done on this for ages. Yet, German highways seem to defy that principle. There’s an AI lesson in there.

My top speed on a German highway has been 240 kph (ca. 150 mph). That’s not normal on any other highway in the world, and I love that I can go that fast legally. If you’ve ever driven at that speed on a German highway, you know 3 things are true for sure:

- The adrenaline is amazing.

- There is always someone driving faster than you.

- You wouldn’t get in if the brakes only worked sometimes.

But that's exactly what we are doing with agentic AI.

Driving without a speed limit works on the German highway because 1) the car is engineered for it, 2) the brakes are built for it, and 3) the road infrastructure is designed with longer curves for high-speed turns.

Remove any one of those, and the speed that made the drive exhilarating becomes the thing that kills you.

So far, Agentic AI is like driving a car with a powerful engine (the underlying model), fuel (the compute power) and built-in mechanics and maps (default architecture and training data) BUT with no brakes or seatbelt (guardrails), all the while it’s going at an unlimited speed on the highway.

Your organisation is that highway, but unlike the German one, it has no secure infrastructure, reliable engineering or built-in resilience to sustain any of it.

Read it again.

Add to that, in the real-world, LLMs function as probabilistic machines providing probabilistic outcomes.

Ask it to follow a “security” rule 10 times, it will comply 7 times. Maybe 8. Maybe 5. That's not a guardrail. That's a wish-list.

Even if you had brakes in your car, imagine one where the brakes only worked accurately 7 out of 10 times. Would you drive it? You wouldn't.
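A quick back-of-the-envelope calculation shows why "7 out of 10" is worse than it sounds once an agent chains many actions together. The sketch below is illustrative only. It assumes each action is independent and that the compliance rate stays constant, which real agents will not satisfy exactly, and `chance_of_full_compliance` is a hypothetical helper name.

```python
# Illustrative only: assumes every action is independent and the per-action
# compliance rate never changes. Real agent behaviour is messier than this.

def chance_of_full_compliance(per_step_rate, steps):
    """Probability that a rule is followed on every one of `steps` actions."""
    if not 0.0 <= per_step_rate <= 1.0:
        raise ValueError("per_step_rate must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_rate ** steps


for rate in (0.5, 0.7, 0.8):
    total = chance_of_full_compliance(rate, 10)
    print(f"{rate:.0%} per action -> {total:.1%} across 10 actions")
```

Under those assumptions, a rule that holds 70% of the time on a single action holds across a 10-action workflow less than 3% of the time. That is the brake that only works sometimes, compounded.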

We are giving Agentic AI unlimited speed, privileged permissions, and access to tools and sensitive data, despite knowing that it will only work accurately some of the time, that it will hallucinate, and that it will provide unreliable outputs. Add to that, AI has no concept of ethics, repercussions or unintended outcomes.

When I drive 240 kph (or 150 mph), on a German highway, I trust the engineering of the vehicle and the physical infrastructure of the road. I trust that when things are about to go out of control, I can use the brakes reliably and predictably, as long as I maintain the safe distance.

The car alone isn’t enough. The infrastructure within and around it matters. I wouldn’t go that speed in the U.K. where I now drive every day. Not just because it’s illegal. But also because the infrastructure around it doesn’t support it, reliably (even if it were legal).

German highways are built specifically with longer curves to allow drivers to turn safely at massive speeds. Add to that stringent and more rigorous licensing, and stricter traffic rules (tailgating is punished, lane discipline is strictly followed, etc).

Whenever you drive, you take a calculated risk subconsciously, every time, based on the level of safety guardrails and limits engineered directly into the system and the infrastructure around it.

That brings me to the maturity roadmap for agentic AI governance and security in enterprises.

Your 5-Step Roadmap to AI Governance and Security

The above examples illustrate this perfectly. AI adoption is everywhere. Trust is not.

You can’t stop the chaos entirely. However, there are things you can do to build reliability, resilience and trust within and around your AI systems that are taking actions, orchestrating workflows and making business decisions on your behalf.

Firstly, how do you even define resilience?

Most people define resilience as something that happens in the moment of crisis or adversity. I see it differently.

Building resilience doesn't happen when things go wrong. It happens long before that, by building the right governance, controls and architecture. That way you have a better chance of adapting when things do go wrong.

Scaling without the right governance, architecture and implementation is like running faster in the wrong direction without brakes.

It won’t get you to the right destination, no matter the speed.

I’ve worked with some of the biggest organisations across finance, healthcare and other critical infrastructure sectors across EMEA. The constant themes I see are:

- Lack of an AI strategy tied to AI governance

- Lack of AI governance pillars involving the right stakeholders

- Lack of implementation or integration through controls and engineering

My 5-step framework brings years of those learnings together in one roadmap that I recommend for every organisation that wants to go beyond just an AI pilot, safely, securely, and reliably, while building trusted AI.

What this roadmap is and what it isn’t:

- It is practical guidance based on what I have seen work and have implemented myself

- It is an iterative process to get you started reliably

- It requires bringing multiple stakeholders together; it is a team sport

- It provides a way to increase your AI maturity in phases, over time

- It is not an exhaustive list

- It is not going to make you 100% compliant or 100% secure

- It is not a metrics framework; you’ll still need to measure and evaluate accordingly

All of the following steps or dimensions of maturity are important. You may decide to invest more in one or the other depending on your maturity, but I would not recommend skipping any one of them.

Let’s dig in.

Step 1: Data Readiness and Validation

The best thing you can do for your AI actually has little to do with the AI or even technical controls. It has a heck of a lot to do with fixing your data. That is your ground zero.

Early 2023, in my role as the Group Chief Security Officer, my team and I were working together with other key stakeholders in the organisation to roll out an enterprise AI tool for the entire organisation. Data governance was the first key step in that AI rollout. In fact, I provide that example in my practical AI strategy template.

Most people misunderstand why data readiness and validation is key.

Unless and until you have the right data quality, data classification and data governance in place, you are just exposing your data to AI, just waiting for a data breach to happen.

This could be because your AI now has access to data it shouldn’t, or just because you didn’t put the right DLP measures in place and your employees, knowingly or unknowingly, have exposed your sensitive data to the Internet. Much of that exposed data ends up on the dark web, where criminals sell it for profit, espionage or further targeted attacks against your infrastructure.

As we rolled out the enterprise AI, we had defined 3 key pre-requisites and outcomes:

Enterprise Oversight

Banning or not providing AI tools, when ChatGPT was already public and while new GenAI tools were releasing every week, would have just increased, not reduced our Shadow AI. We knew employees were already using GenAI tools. It was just a matter of time before that would become a part of our Shadow AI.

If you make security too difficult, users will find ways to bypass it. You know it.

Data Governance

Improved data governance, specifically improved and more accurate data classification, was both a goal and a test use case. In order to deploy an AI tool effectively and securely, we knew we had to validate our data labelling and classification. Most companies don’t and this is where the biggest security leaks happen.

Better data classification and use of data for business decisions was a pre-requisite and a goal for deploying the GenAI tool in the organisation.

Note: This was only possible since we had already done a lot of heavy lifting and actual classification in the years before, given that we were heavily regulated and had a lot of customer data. If you have never done it, this is a whole project in itself, and absolutely necessary for secure deployment and integration of AI. Do it right away, if you haven’t, even if you have already deployed AI.

Responsible Integration

Testing this rollout with a specific but varied group of users (different roles and responsibilities, starting with non-admin privileges) with specific use cases and data set was necessary and a key part of the overall equation for responsible AI integration. I share this example in my practical AI strategy and governance template.

Here is the part nobody wants to sit with: 75% of large enterprises already have shadow AI running across their networks. 86% are blind to where their AI data is actually flowing.

Employees are installing autonomous agents with real system access and connecting them to enterprise infrastructure, and security teams are finding out about it the same way everyone else does: after the fact or, worse yet, after a breach.

In 2014, Amazon built an AI hiring tool to screen engineering candidates at speed. By 2015, the company realised the model was not reviewing resumes in a gender-neutral way.

It hated women. Well not literally, but it learned that from the dataset that did.

It was penalising resumes containing the word "women's", downgrading graduates of two all-women's colleges, and favouring action verbs that male applicants used more frequently. The system had been trained on ten years of resumes from a male-dominated industry. Amazon eventually scrapped it, as Reuters reported in 2018. Rightly so.

This is not a historical footnote. It is the blueprint for what happens every time an organisation gives AI access to unclassified, unvalidated and biased data, but somehow expects sound decisions to come out the other end.

Data readiness is not about clean data. It is about three things working together: classified, unbiased, and access-controlled.

The moment you give an AI model access to your entire data environment without classifying it first, you hand it access to everything your employees were never supposed to see. The identity and access management framework your team spent years building collapses in a single rollout. Employees who should not have seen confidential data now do. Not because the AI was malicious, but because you did not tell it what it should not touch.

And when training data carries historical bias, as it almost always does, the AI amplifies that bias in every decision it makes. At speed. Without pause.
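One simple starting point for spotting that kind of bias is to compare selection rates between groups in the historical data before any model is trained on it. The Python sketch below computes a disparate impact ratio and flags it against the commonly used four-fifths (80%) threshold. It is a first-pass check under stated assumptions, not a full bias audit and not a reconstruction of how Amazon tested its tool. The records are made up, and `selection_rates` and `disparate_impact_ratio` are hypothetical helper names.

```python
from collections import defaultdict


def selection_rates(records):
    """records: iterable of (group, selected) pairs, where selected is a bool."""
    totals = defaultdict(int)
    chosen = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            chosen[group] += 1
    return {group: chosen[group] / totals[group] for group in totals}


def disparate_impact_ratio(records):
    """Lowest group selection rate divided by the highest group selection rate."""
    rates = selection_rates(records)
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no group has any selected records")
    return min(rates.values()) / highest


# Made-up historical hiring outcomes, for illustration only.
history = (
    [("men", True)] * 60 + [("men", False)] * 40
    + [("women", True)] * 30 + [("women", False)] * 70
)

ratio = disparate_impact_ratio(history)
flagged = ratio < 0.8  # four-fifths threshold
print(f"disparate impact ratio: {ratio:.2f}, flagged: {flagged}")
```

A ratio below 0.8 does not prove discrimination, and a ratio above it does not prove fairness. It is a cheap signal that the dataset needs a closer look before an agent learns from it.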

In practice, start here:

Classify your data before any AI tool gets access to it. Not retroactively. Before. This includes sensitivity levels, access permissions, and data lineage.

Map each AI agent’s access to its owner’s data access. If a person cannot see it, the Non-Human Identity (NHI) owned by that person should not either. Your AI does not override your IAM model. It must be governed by it.

Align your AI’s access to your IAM governance and policy. Don’t have one? You need one.

Additionally, enforce least-privilege data access per agent identity, not per user group. An invoice processing agent gets read access to the finance data store only. It does not get access to HR records, customer PII, or source code repositories even if those sit in the same environment.

Run a bias audit on every dataset used for AI decision-making before it goes live. Each must be tested before an agent is permitted to make or influence decisions affecting people, including hiring, lending, fraud detection, healthcare triage, or contract assessment.

Test your training data for bias before deploying AI to any decision workflow, especially hiring, lending, healthcare, fraud detection, or anywhere else AI makes a decision on your behalf. Bias auditing is not a one-time exercise.

Run a controlled pilot with a defined user group first. The whole organisation is not your test environment. Don’t treat them like one.
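The access rules in the steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a real IAM integration: the sensitivity labels in `LEVELS`, the `Dataset`, `Person` and `Agent` classes, and `agent_can_read` are all hypothetical. It shows three ideas together: unclassified data is denied by default, an agent never sees more than its owner, and each agent is additionally scoped to its own least-privilege set of data stores.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical sensitivity labels, ordered from least to most sensitive.
LEVELS = {"public": 0, "internal": 1, "confidential": 2, "restricted": 3}


@dataclass(frozen=True)
class Dataset:
    name: str
    store: str                      # e.g. "finance" or "hr"
    classification: Optional[str]   # None means nobody has classified it yet


@dataclass(frozen=True)
class Person:
    name: str
    clearance: str
    stores: frozenset


@dataclass(frozen=True)
class Agent:
    name: str
    owner: Person
    stores: frozenset               # data stores this agent is scoped to
    max_classification: str


def agent_can_read(agent, dataset):
    """Allow a read only if BOTH the owner and the agent's own scope permit it."""
    if dataset.classification is None:
        return False  # unclassified data is denied by default
    level = LEVELS[dataset.classification]
    owner_ok = (dataset.store in agent.owner.stores
                and level <= LEVELS[agent.owner.clearance])
    agent_ok = (dataset.store in agent.stores
                and level <= LEVELS[agent.max_classification])
    return owner_ok and agent_ok


# Made-up example: the owner can see finance and HR, the agent only finance.
owner = Person("finance-analyst", "confidential", frozenset({"finance", "hr"}))
invoice_agent = Agent("invoice-processor", owner, frozenset({"finance"}), "internal")

invoices = Dataset("invoices-2025", "finance", "internal")
hr_records = Dataset("salary-reviews", "hr", "confidential")
legacy_dump = Dataset("old-share-export", "finance", None)

for ds in (invoices, hr_records, legacy_dump):
    print(ds.name, agent_can_read(invoice_agent, ds))
```

In this made-up setup the invoice agent can read the classified finance data, but not the HR records its owner could see, and not the unclassified export sitting in the same finance store.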

Your AI cannot produce trustworthy decisions from untrustworthy data. No model architecture will solve the problem that your “bad” data created.


Step 2: Strategy and Leadership

In one of my CISO roles, I was working with my team on a national AI strategy. This was later released and became a key part of the national AI initiative, with security, privacy, ethics and resilience as the underlying backbone. Learning from that, I implemented AI strategies for organisations in the critical infrastructure space. Here’s the biggest pattern I’ve noticed.

Most organisations I work with cannot answer a simple question: where in your business are AI systems currently influencing decisions that affect your business operations, your clients, your regulatory standing or your business outcomes? What exact use cases have you defined that are key for AI to implement and work with? What are the key governance pillars AI will be deployed and implemented on and are they aligned with the overall business objectives?

Last year, I wrote about AI in decision making. A strong AI strategy doesn’t just ask where you plan to use AI, but also where it is operating right now and which business decisions AI is augmenting or replacing.

Especially the ones you don’t know about.

That gap is the problem. Agentic AI is already embedded in M&A due diligence workflows, credit risk modelling, supplier evaluation, and strategic planning tools at enterprise scale. It is not a future scenario. It is today's reality. According to Deloitte's 2026 State of AI in the Enterprise report, AI agents are outpacing the deterministic guardrails they need, and only one in five companies has a mature model for governance of autonomous AI agents.

The reason is almost never the model. It is the governance pillars being an afterthought and added after the deployment, if at all.

A hallucination in a customer service chatbot is embarrassing. A hallucination in an M&A financial model or a credit risk assessment is a fiduciary liability. Those are not the same category of failure.

This needs to start with a strong (yet simple) AI strategy, tied to the AI governance pillars that underpin every AI implementation in your organisation.

"We're using AI because everyone else is" is the one sentence I've heard in more board and executive meetings than I care to count. It is not an AI strategy. It is a liability statement looking for a context.

Most organisations have AI adoption plans. Almost none have an AI strategy with governance pillars directly attached to it.

Ask your leadership team to answer these questions right now:

- What is the purpose of using AI for your business and how will it support your overall business goals?

- What is the specific problem you are solving in your business, segment or market?

- What are the key expected outcomes of your AI strategy implementation? Be specific and measurable.

- What are the key opportunities vs. risks for the AI use cases you are working on?

- What specific business decisions will AI augment vs. replace?

- What happens when it makes wrong decisions?

- Who is accountable for that unintended outcome?

If the answers are vague, or the room goes quiet, your AI strategy “just because others are doing it” doesn’t hold.

A real AI strategy must define business cases with measurable outcomes tied to specific decisions, not to general efficiency goals. "Cut costs" is a starting point, but it is not enough.

The NIST AI Risk Management Framework, released in 2023, gives you four functions to structure from: Govern, Map, Measure, and Manage. But none of them start without a sound strategy. ISO/IEC 42001 provides the management system standard. Both are available. But very few organisations have operationalised them.

As my team and I started the AI implementation project, now more than 3 years ago, the first piece of work was alignment on AI strategy. Not 20 pages long. Just 2-3. Here’s a real-world practical AI strategy template I have used to get started.

In practice, start here:

Define AI use cases by business outcome, not by tool. What decision or process is this solving, and what does good output look like?

Map every AI deployment to the business decisions it will influence, replace, or augment before it goes live.

Build an AI use case registry and record every deployed agent in it. You cannot govern what you have not inventoried. Every AI agent running in your enterprise, whether deployed by IT, a business unit, or an individual employee, must be registered with a named owner, its designated business function, the decisions it influences or replaces, and its data access scope.

Run an AI readiness / maturity assessment before any rollout. Assess readiness and maturity across all five dimensions I talk about: data, strategy and leadership, culture and literacy, governance and implementation, and controls and engineering. A Google study found that governance maturity, not just infrastructure, is the strongest indicator of real AI maturity.

Attach governance pillars to your strategy from the beginning. If governance pillars are not a part of your strategy, they will not be funded. If they are not funded, they won’t be executed. Without execution, there is no trusted AI. Those governance pillars are key. Check my next step of the roadmap for more info on governance pillars and implementation.

Assess risks and opportunities together. Ethics, bias, discrimination, and explainability sit next to efficiency and cost, not after them. Use my free AI risk assessment template to get started.

Define your AI risk tolerance explicitly at board level, before use cases are approved. Risk tolerance for agentic AI is not the same as your existing enterprise risk tolerance.

An AI agent making probabilistic decisions introduces a new class of risk: irreversibility, bias amplification, and autonomous action at scale.
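The use case registry from the steps above does not need a big platform to get started. The Python sketch below is one minimal way to model it, assuming the four things every record must carry (named owner, business function, decisions influenced or replaced, data access scope). `AgentRecord`, `AgentRegistry`, `REQUIRED_FIELDS` and the sample agent names are hypothetical, and the list of observed agents stands in for whatever discovery tooling you already have.

```python
from dataclasses import dataclass

REQUIRED_FIELDS = ("owner", "business_function", "decisions", "data_scope")


@dataclass(frozen=True)
class AgentRecord:
    agent_id: str
    owner: str                 # a named, accountable person or role
    business_function: str
    decisions: tuple           # decisions the agent influences or replaces
    data_scope: tuple          # data stores the agent may access


class AgentRegistry:
    def __init__(self):
        self._records = {}

    def register(self, record):
        """Reject records with an empty required field or a duplicate ID."""
        missing = [f for f in REQUIRED_FIELDS if not getattr(record, f)]
        if missing:
            raise ValueError(f"{record.agent_id}: missing {', '.join(missing)}")
        if record.agent_id in self._records:
            raise ValueError(f"{record.agent_id} is already registered")
        self._records[record.agent_id] = record

    def unregistered(self, observed_ids):
        """Return agents seen running that nobody has registered."""
        return sorted(set(observed_ids) - set(self._records))


registry = AgentRegistry()
registry.register(AgentRecord(
    agent_id="invoice-processor",
    owner="Head of Accounts Payable",
    business_function="Invoice matching",
    decisions=("approve invoices under threshold",),
    data_scope=("finance",),
))

# IDs as they might come from endpoint or network discovery (made up here).
seen_running = ["invoice-processor", "openclaw-laptop-17"]
print(registry.unregistered(seen_running))
```

The useful part is the last call: anything that shows up running but is not in the registry is, by definition, your shadow AI list for this week.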

Strategy without governance and implementation is just paperwork. And paperwork never stopped a breach.

We will go deeper into agentic AI governance, controls and engineering in the next steps of the roadmap.

Part 2 of this series is coming out soon, where I’ll cover the rest of the maturity model and roadmap, and more, along with a bonus for you.

Until next time, this is Monica, signing off!

— Monica Verma

P.S. Please follow me/subscribe on Youtube, Linkedin, Spotify and Apple. It truly helps. Or book a 1-1 advisory call, if I can help you.

***