The Predictability Factor is a weekly deep dive at the intersection of AI, Security, Privacy and Tech, to help you Go From Chaos to Resilience in The World of AI.

Let’s look at three different data points.

A one-person team being valued at billions of dollars isn’t just theory anymore. Peter Steinberger, the developer from Austria who built OpenClaw (formerly known as ClaudeBot and then MoltBot), showed the world that a one-person team with a $1 billion valuation is absolutely possible. He received billion-dollar bids from both OpenAI and Meta. He accepted the offer from OpenAI and joined their team.

That same month, Dario Amodei stood in Davos and said that AI could replace software engineers in 6 to 12 months. According to Amodei, there will be no more need for software engineer roles as we know them. In fact, according to him, the software engineer's role will shift primarily to that of a PM or a builder.

I don't write any code anymore. I just let the model write the code. I edit it.

Matt Shumer just came out with his extremely scary take on how AI is going to replace your role in any and every industry.

Three data points. One very uncomfortable question. How and where is AI changing your role and enterprise?

In 2025, I recorded this video on 7 key shifts in AI that I was seeing then, and the ones I believed would change your career and your business in this era of AI. Today, I know that to be true.

In this blog, I’ll break down each of those 7 major AI shifts. For each, I share my detailed PoV and my updated 2026 prediction (compared to my 2025 prediction), so you can see the direct correlation, the transition that is happening, and which areas are still promising to skill up.

The State in 2026

Companies have been replacing entire teams with AI. For others, the roles are shifting quite a lot and very fast.

I’ll be brutally honest with you. The world is freaking out. At least that's what I see. Everyone is asking: ‘When will AI take my job?’ Here’s my take on it.

Before AI will take your job, your fear of ‘AI taking your job’ will take your job.

Read that again.

The “AI replacing you” scenario may happen in months or years. But that fear is here. It is in the now.

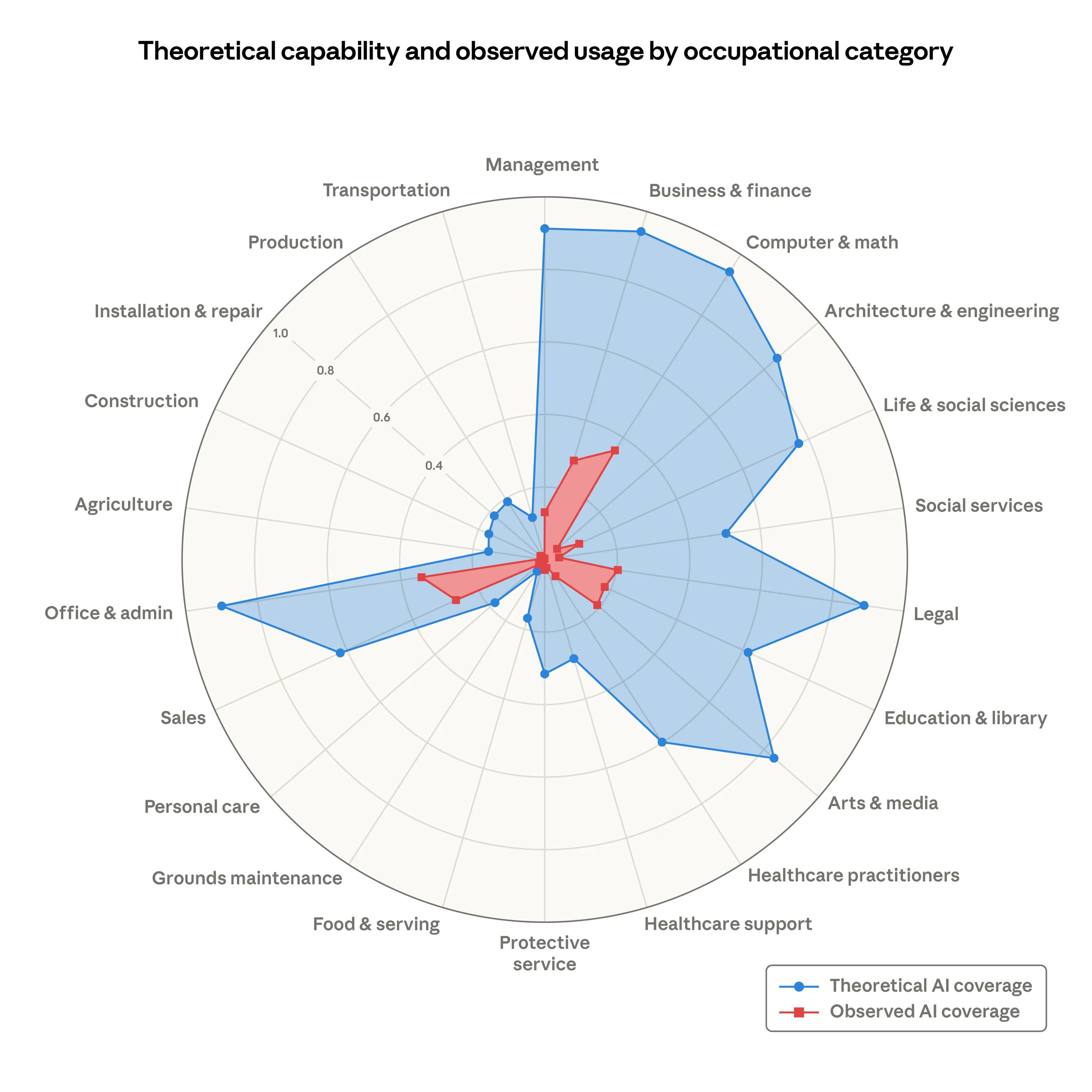

Recently, Anthropic released a research paper on which roles AI will replace, to what extent, and where the margin is today.

There are two ways to look at this analysis:

While we grew up believing that jobs in industries like computer science, tech, legal, et cetera will be the safest and surest jobs, AI has entirely changed that. These very jobs that seemed the safest will be the first ones to be displaced in different ways.

However, the other way to look at it is the good news: we are not there yet. According to the research, the observed coverage is still much lower than the theoretical capability and coverage of AI. That means you have leverage. You may not have much time, but you definitely have leverage right now, provided you know how to use AI in both your personal and professional lives in a way that's reliable, trustworthy and secure.

Let’s dig in.

7 Major AI Shifts That Have Already Changed Your Job

TLDR:

1. AI-powered personalisation goes mainstream

My 2025 Prediction: Hyper personalisation is the future of customer experiences, no matter which industry. That is also true for cyber, especially for cyber.

My 2026 Update: LLM agents and agentic multimodal AI continue to drive hyper personalisation. Not just at the personal level but at the enterprise level. That's where the big bucks are.

OpenClaw, the open-source local AI agent that hit more than 100K GitHub stars within its first 7 days, was created by one developer in Austria and has been modified and deployed by hundreds of thousands of people across the world. It just proved how massive AI-powered personalisation has become, and it continues to win in 2026. It achieves this depth of hyper personalisation through three primary technical measures:

Persistent local memory: Unlike ChatGPT or Claude, which often forget specific details between threads or sessions, OpenClaw stores your context, preferences, project structures and past conversations 24/7 across sessions to build a long-term memory that makes every interaction more tailored than the last. You can do that in Claude within a project, but still not across sessions.

Contextual reasoning across silos: OpenClaw connects your actual life data like emails, calendars, GitHub, Notion, Slack, WhatsApp, you name it. It doesn't just know you, it knows what you're working on right now. It can distinguish between a work email and a personal one based on your historical behaviour, not just keywords. Claude is also catching up fast on that with its latest marketplace.

The markdown framework: With soul.md you define your assistant’s personality, e.g. concise versus verbose, rational versus creative, and with user.md you feed it a dossier about yourself. Over time, OpenClaw auto-updates this file with your optimisation goals, your preferred flight seats, your coding style, etc. In Claude Code and others, I have fed the AI models my unique voice and the DNA of who I am through different .md files. Without that personalisation, your AI is lacking all the context.

Context is king. This is especially crucial in cybersecurity, which we will explore in the next section.

On the flip side, what happens when this level of personalisation is running inside your security operations, your vendor risk workflows, or your financial decision models?

For example, when AI is buying stuff on your behalf, you need to be able to verify which of the actions it took were part of the approved instruction set and where the AI went outside the set parameters and started taking actions without explicit human (your) approval.

We will see the need for verifiable AI identities and instructions. That level of personalisation (in business) requires explicit and verifiable intent.

The moment personalisation moves from "what to show you" to "what actions to take for you or on your behalf," it becomes an infrastructure that can fail with massive consequences and requires explicit and verifiable intent.
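To make "explicit and verifiable intent" a little more concrete, here is a minimal sketch of one possible approach. It is not how OpenClaw or any particular product works. It assumes the human owner signs an approved instruction set with a key the agent never holds, and that agent actions arrive as simple dictionaries. The field names (`allowed_actions`, `max_amount`) and the key are purely illustrative.

```python
import hashlib
import hmac
import json

# Hypothetical key held by the human principal, never by the agent.
SECRET_KEY = b"demo-key-held-by-the-human-owner"


def sign_instruction_set(instructions: dict) -> str:
    """Return an HMAC-SHA256 tag over a canonical JSON form of the instructions."""
    payload = json.dumps(instructions, sort_keys=True).encode()
    return hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()


def is_authentic(instructions: dict, tag: str) -> bool:
    """Check that the instruction set is unchanged since it was approved."""
    return hmac.compare_digest(sign_instruction_set(instructions), tag)


def audit_actions(instructions: dict, tag: str, actions: list) -> list:
    """Return the actions that fall outside the approved instruction set."""
    if not is_authentic(instructions, tag):
        raise ValueError("instruction set was modified after approval")
    violations = []
    for action in actions:
        allowed = action["type"] in instructions["allowed_actions"]
        within_budget = action.get("amount", 0) <= instructions["max_amount"]
        if not (allowed and within_budget):
            violations.append(action)
    return violations


approved = {"allowed_actions": ["search", "purchase"], "max_amount": 100}
approval_tag = sign_instruction_set(approved)

agent_actions = [
    {"type": "search", "query": "running shoes"},
    {"type": "purchase", "amount": 80},
    {"type": "purchase", "amount": 450},     # exceeds the approved limit
    {"type": "send_email", "to": "vendor"},  # never approved
]

out_of_scope = audit_actions(approved, approval_tag, agent_actions)
print(out_of_scope)
```

The point of the signature is that "what was approved" can be checked after the fact, rather than reconstructed from the agent's own account of what it was told.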

2. The battle for high-quality data and context intensifies

My 2025 Prediction: Most data out there needs some serious cleaning up. Erroneous, inaccurate, incomplete and / or biased data are all examples of bad quality data and will only lead to unreliable, inaccurate and biased outcomes.

My 2026 Update: I’m still dealing with companies that have jumped in head-first to deploy AI models or AI tools without any data classification or data governance in place. Do you know:

What data is your AI agent reading right now?

Who approved that access?

How do you control data leakage through an LLM?

Who controls and governs the data infrastructure?

How have you implemented the controls for data security?

What data and decision workflows is your AI agent now actively managing?

How biased, discriminatory, inaccurate and erroneous is the data that your model has been trained on?
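A few of these questions (what is the agent reading, who approved it, is the data even classified) can be answered mechanically once a catalogue and an approval register exist. The sketch below is one hedged illustration, assuming a deny-by-default policy. The dataset names, agent name, classification levels and approver address are all made up for the example.

```python
# Hypothetical sensitivity ordering; a higher number means more sensitive.
LEVELS = {"public": 0, "internal": 1, "confidential": 2, "restricted": 3}

# Hypothetical data catalogue: dataset -> classification.
CATALOG = {"product_docs": "public", "crm_contacts": "confidential"}

# Hypothetical approval register: (agent, dataset) -> human approver.
GRANTS = {("support-agent", "product_docs"): "alice@example.com"}


def check_read(agent: str, dataset: str, clearance: str):
    """Deny by default: a read needs a classification, a grant and enough clearance."""
    classification = CATALOG.get(dataset)
    if classification is None:
        return (False, "dataset is unclassified")
    approver = GRANTS.get((agent, dataset))
    if approver is None:
        return (False, "no approved grant on record")
    if LEVELS[classification] > LEVELS[clearance]:
        return (False, "classification exceeds agent clearance")
    return (True, f"approved by {approver}")


print(check_read("support-agent", "product_docs", "internal"))
print(check_read("support-agent", "crm_contacts", "internal"))
print(check_read("support-agent", "hr_files", "restricted"))
```

If your organisation cannot fill in the two dictionaries at the top, that is the governance gap, not the code.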

Your AI is only as reliable as the data you have been neglecting for the past decade or more. I see that with so many companies. It is not a technology problem. It is a governance problem that was always there, and is still there, but now running at AI speed, and you are not able to catch up.

In January 2026, the World Economic Forum named data readiness as the defining strategic challenge for enterprise AI, ahead of model selection, compute, and talent. Not because data is more technically interesting than a frontier model, but because without clean, governed, and auditable data, the frontier model is just expensive guesswork, predicting the next token without any context.

Contextual data is key. Not the model, not the compute. The context that the model has access to. Most organisations are still trying to pick the right AI tool while sitting on data too ungoverned for any tool to reason with properly.

Here is a great example of that. Claude Code Security did not shake the appsec market by being a smarter scanner. It shook it because it reasons about your code with context: Understanding how components interact, tracing how data moves through your application, catching vulnerabilities that rule-based tools structurally cannot see.

In cybersecurity, that context means moving from generic security rules to AI that actually understands your specific environment better than you do and can bring that context to you.

It doesn't mean that AI-powered code security tools like Claude Code Security will simply replace cybersecurity vendors. Nor will they solve all of appsec as they stand now. More on that in the next section.

What is missing is the context. That gap is yours to own.

3. Not every problem is an AI problem (until it is)

My 2025 Prediction: AI is not new. But we are using AI like never before. Despite that, AI is not a rainmaker and we aren't reaching AGI in the next months.

My 2026 Update: AI is still not new. But here's what shifted in 2026. Today, it's not just about AI that says things, it's about AI agents that actually do things. OpenClaw is a fantastic example of that. OpenClaw’s slogan is “The AI that actually does things”. API calls and skills are everywhere, especially the malicious ones.

The current state of “perception of AI” is binary.

On one hand are those who are in complete denial of AI changing every aspect of your enterprise. There are still many organisations that have blocked any and all use of AI for their employees. What they don't understand is that reality is different. In reality, they have not really blocked what they think they have blocked.

On the other hand, we have people who are extremely impressed with what happened with MoltBook and how AI agents created their own social network, talking to each other, talking about taking over humanity and whatnot, and who believe this is already AGI. It turns out the platform had unsecured Supabase credentials, making it trivially easy for humans to impersonate AI agents and post alarming content. It’s likely that the most alarming AI behaviour people attributed to agents was actually a person. And just recently, MoltBook was acquired by Meta, which seems so fitting for Zuckerberg.

You have to remember that AI is still predicting the next token. This isn't AGI.

Almost every problem today becomes an AI problem, even when it is not, and that's the real issue. What both camps are missing is the actual inflection. While AI is acting, booking meetings, querying databases, executing code, applying patches, that very transition poses a big risk.

That transition from ‘saying’ to ‘doing’ changes the threat surface, the governance requirements, and the board accountability question.

I said it in 2025 and I'm saying it again, despite this major change in how AI agents are being integrated across (orchestrated) workflows, we aren't reaching AGI in the next months. Plus, you're asking the wrong question.

The real question is whether you’ll be ready when that happens. Do you have the necessary guardrails and measures in place before AI reaches the state of superintelligence?

On our current trajectory, we believe we may be only a couple of years away from early versions of true superintelligence. If we are right, by the end of 2028 more of the world's intellectual capacity could reside inside of data centres than outside of them. Sharing control means accepting that some things are going to go wrong in exchange for not having one thing go mega wrong, cemented totalitarian control.

There is a deeper message in the statement "…sharing control means accepting that some things are going to go wrong". Building a secure digital future will require more than just technical solutions and definitely more than AI.

Most of the key solutions to some of the world’s biggest problems will still be a combination of AI and humans.

Take the example of vulnerability management. The biggest things that I'm missing with AI agents on the defence side are:

The deterministic controls throughout the workflow

The testing, validation and feedback loop

Repeatable and validated outcomes, every time and without continuous human supervision

The question isn’t just about whether AI can scan, reason and patch vulnerabilities. Currently, where we stand, the model can find the vulnerability and seemingly patch it. But you still need the human in the loop.

Validated findings appear in the Claude Code Security dashboard, where teams can review them, inspect the suggested patches, and approve fixes. Because these issues often involve nuances that are difficult to assess from source code alone, Claude also provides a confidence rating for each finding.

This is the case with so many AI use cases in cybersecurity. As long as that is the case, AI alone will not be the solution. Not saying this will never change, but not right away. A frontier AI model will not replace all cybersecurity vendors overnight.

The real question is how AI takes care of the rest of the vulnerability management process, i.e. validation, accountability, repeatability and robustness. That means knowing when those patches are applied, how they were validated and how all of this is handled at scale.
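The deterministic controls and the validation loop I am missing can be sketched as a gate that every AI-proposed patch has to pass before it is applied. This is a simplified illustration under my own assumptions, not a description of Claude Code Security. The check names, the 0.8 confidence threshold and the `PatchProposal` fields are hypothetical, and the checks are placeholders for a real test suite and re-scan.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class PatchProposal:
    finding_id: str
    confidence: float                  # model-reported confidence, 0.0 to 1.0
    approved_by: Optional[str] = None  # named human reviewer, if any


Check = Tuple[str, Callable[[PatchProposal], bool]]


def gate_patch(proposal: PatchProposal, checks: List[Check],
               min_confidence: float = 0.8):
    """Run every check in a fixed order and return (decision, log)."""
    log = []
    for name, check in checks:
        passed = check(proposal)
        log.append((name, passed))
        if not passed:
            return "rejected", log  # stop at the first failed control
    # Passing the checks is not enough: a named human must have approved,
    # and low-confidence findings always go back for review.
    if proposal.approved_by is None or proposal.confidence < min_confidence:
        return "needs_human_review", log
    return "apply", log


# Placeholder checks; real ones would run tests, re-scan the code, and so on.
checks = [
    ("unit_tests_pass", lambda p: True),
    ("rescan_shows_fix", lambda p: True),
]

decision, log = gate_patch(
    PatchProposal("FINDING-1", 0.95, approved_by="reviewer@example.com"), checks
)
print(decision, log)
```

The same input always produces the same decision and the same log, which is what makes the outcome repeatable and auditable regardless of how the model behaved on a given day.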

4. AI is your decision-making copilot

My 2025 Prediction: AI is like a brainy sidekick, your own personalised Einstein. It’s your advisor or your new decision making buddy. I don't just mean ChatGPT or Claude. I am talking about AI influencing and even making decisions on your behalf.

My 2026 Update: AI continues to influence and make decisions on your behalf e.g. influencing your dating life, whether your credit loan is accepted or denied, your next fashion haul, etc. This integration of AI in decision making keeps on getting bigger by the minute, especially in enterprises and businesses.

Last year in Toronto, Ilya Sutskever gave a keynote on AI in which he said that AI will soon be able to do all the tasks that humans are able to do. He doesn't know exactly when or how much time it will take, but according to him it will happen soon. Dario Amodei warned recently that AI will soon make complex decisions at superhuman speed in finance and defence.

No one can really predict the future with 100% probability. What we do know is that AI is moving towards managing “logic” and “reasoning” at global scale.

I've been talking to a lot of different organisations in different sectors, particularly finance, investment, auditing and tech. They are integrating AI more and more into business workflows and decisions. A real example that I was recently advising on is a big auditing firm that is integrating AI into its auditing workflows. The efficiency case is real. The accountability question, however, is nuanced and complex: When the AI flags a material weakness in a client's financial statement, who validates and signs off on the audit opinion?

The challenge for 2026 is not only managing the speed of these choices but also deciding who is ultimately accountable and how much human oversight is needed, especially when things go wrong. The next question is exactly how we carry out that human oversight.

People confuse and misunderstand human oversight terribly. There is a massive difference between human in the loop for actions versus human in the loop for critical decision-making and accountability.

While you may want seamless orchestrated workflows that don't require a human to approve every step, every action still needs to be verifiable and every consequence of those actions needs to be held accountable by a human, even if carried out by an AI agent. Once you understand that distinction, you start integrating human in the loop differently.

Every organisation will need to map their critical business decision workflows and how these business decision data points and workflows run the overall business operations. Without that, organisations will be running and operating their business with AI, but in blind mode.
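The distinction between human in the loop for actions and human in the loop for critical decisions can be expressed as a simple routing rule. The sketch below is an assumption-laden illustration: the set of critical decisions, the action names and the owner addresses are hypothetical, and in practice they would come out of the workflow mapping exercise described above.

```python
from dataclasses import dataclass

# Hypothetical list of decisions that always require a human sign-off.
CRITICAL_DECISIONS = {"sign_audit_opinion", "approve_payment"}


@dataclass
class Action:
    name: str
    accountable_owner: str  # the human answerable for the outcome


def route(action: Action, audit_log: list) -> str:
    """Log every action with its owner; block only critical decisions."""
    audit_log.append((action.name, action.accountable_owner))
    if action.name in CRITICAL_DECISIONS:
        return "await_human_signoff"
    return "auto_execute"


audit_log = []
print(route(Action("fetch_ledger_entries", "audit.lead@example.com"), audit_log))
print(route(Action("sign_audit_opinion", "audit.partner@example.com"), audit_log))
print(audit_log)
```

Routine steps run without interruption, yet every one of them is recorded against a named human, and the decisions that carry liability still stop and wait for a person.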

5. Explainable AI takes the centre stage

My 2025 Prediction: As AI starts making your decisions, you need to know how it came to that decision. Enter explainable AI, which is also going to be big for legal reasons.

My 2026 Update: Explainable AI needs to be at the centre stage, not just for legal reasons but also for security, privacy and humanity.

With Anthropic being labeled a “supply chain risk”, I cannot imagine a stronger reason why ‘Explainable AI’ matters. What Pentagon versus Anthropic revealed goes much deeper than just AI ethics.

Companies like Lockheed Martin, Palantir, Shield AI etc. have been using machine learning in defence systems for ages for different actions. What has changed drastically is that these systems now use large language models (LLMs) as their reasoning layer, and those LLMs are probabilistic in nature: they hallucinate, can be highly inaccurate, can produce different outputs for the same input, and carry their own risks. I did a deep-dive on this issue here.

Here's the thing.

You may not use AI for those very reasons. But you very well need to know your AI’s entire Chain of Thought, why it is behaving the way it is “behaving” and what paths led it to come to the conclusion, decision or action it did.

Without explainability, there is no accountability.

Is your AI model being coerced to give you false information or hide certain information from you or outright lie to you as a part of surveillance?

Without explainability, there is no technical way to prove that a surveillance target or a "kill chain" decision was indeed lawful and not a result of algorithmic bias or a "hallucination", and that’s precisely why we need more transparency and explainability with every single AI model out there.

“The model did it” isn’t going to be an acceptable explanation to waive your accountability to a regulator or in court when the AI makes the wrong decision.

Read that again.

What explainability requires is the evidence of review and decision making: decision logs, human-in-the-loop checkpoints, audit trails that show a human reviewed the output before the action was taken. "The model decided" is not an explanation. It is the opening line of a liability.
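One common way to make a decision log usable as evidence is to chain its entries together with hashes, so that any later edit is detectable. The following is a minimal sketch of that idea, assuming a simple in-memory list. The entry fields (`decision`, `reviewer`) and the example values are illustrative, and a production system would also need secure storage and timestamps.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of an entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log: list, decision: str, reviewer: str) -> None:
    """Append a decision, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"decision": decision, "reviewer": reviewer, "prev_hash": prev_hash}
    log.append({**body, "hash": _digest(body)})


def verify_chain(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: entry[k] for k in ("decision", "reviewer", "prev_hash")}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


decision_log = []
append_entry(decision_log, "flag account for review", "analyst@example.com")
append_entry(decision_log, "block transaction", "manager@example.com")
print(verify_chain(decision_log))
```

A log like this does not explain the model's reasoning. What it can show is that a named human reviewed a given output before the action was taken, and that nobody rewrote that record afterwards.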

The AI running your enterprise tools and the AI making targeting decisions in defence systems are using the same reasoning models. Same hallucination risk. Same explainability gap. That gap needs to be bridged.

6. AI is generating synthetic data (not just fake but synthetic)

My 2025 Prediction: Think of synthetic data like a really good fake plant. It looks real, feels real, but you don't need to water it. It can solve a lot of privacy and data protection issues, but again, remember garbage-in-garbage-out, so it needs to be high quality.

My 2026 Update: Your AI vendor cannot tell you what percentage of their training data is synthetic. Ask them. See what happens.

That is not a hypothetical gap. It is the state of the market right now. Privacy regulations, data scarcity, and the economics of annotation costs are pushing banks, healthcare institutions, and various firms to train on AI-generated data rather than real records.

The World Economic Forum named synthetic data governance a strategic priority, specifically because the risks are real and they are not being managed.

Here is what those risks look like in practice. If the data used to generate your synthetic training set carried bias, the synthetic data amplifies it, and every downstream generation amplifies it further. Even privacy-preserving synthetic data may leak the underlying data if it is generated incorrectly. Researchers at the University of Oxford published evidence of this amplification and named it model collapse: AI trained iteratively on its own outputs progressively loses diversity, forgets edge cases, and produces confidently wrong results at scale. Not occasional hallucinations. Structural drift. The model does not know it is degrading. Neither does the organisation that’s using it.

The challenge, as this continues over the coming years, is that we will see data dilution. The very AI models that train on large datasets will generate synthetic data and then train further on the data they generated, causing further degradation and dilution.
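The "forgets edge cases" part of that loop can be illustrated with a toy simulation. To be clear, this is not the Oxford researchers' experiment and there is no real model in it. It simply assumes each generation is "trained" only on a finite sample of the previous generation's output, starting from a made-up dataset of 40 common records and 10 rare ones.

```python
import random


def simulate_collapse(generations: int = 10, sample_size: int = 50, seed: int = 0):
    """Track how many distinct record types survive repeated resampling."""
    rng = random.Random(seed)
    # Generation 0: "real" data with 40 common records and 10 distinct edge cases.
    data = ["common"] * 40 + [f"edge_{i}" for i in range(10)]
    distinct_counts = [len(set(data))]
    for _ in range(generations):
        # Each new generation only sees samples of the previous generation's output.
        data = rng.choices(data, k=sample_size)
        distinct_counts.append(len(set(data)))
    return distinct_counts


counts = simulate_collapse()
print(counts)
```

Because each generation can only contain what the previous one produced, the number of distinct record types can never go up. Once a rare case drops out of a sample, no later generation can recover it.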

AI generated synthetic data is here to stay and will only get bigger and more prominent. There’ll be more synthetic data than real data in the AI models in the coming years.

I have asked this question in dozens of executive and C-level conversations: Who in your organisation is accountable for what the training data was built from, how many generations back, and whether the source data was tagged as synthetic before it entered the pipeline?

I am still waiting for an answer.

That is not a model problem. It is a supply chain problem. I don’t know of any AI governance frameworks in use in organisations today that were designed with this type of data supply chain in mind. Maybe you do? I want to hear from you.
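The accountability question about training data (what it was built from, how many generations back, and whether it was tagged as synthetic) maps naturally onto a lineage record. Here is a minimal sketch under my own assumptions. The `DatasetRecord` fields, dataset names and owners are hypothetical and do not come from any specific governance framework.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class DatasetRecord:
    name: str
    owner: str                  # team or person accountable for this dataset
    synthetic: Optional[bool]   # None means nobody tagged it
    parents: List["DatasetRecord"] = field(default_factory=list)


def synthetic_generations(record: DatasetRecord) -> int:
    """Count synthetic generations along the longest lineage path."""
    own = 1 if record.synthetic else 0
    return own + max((synthetic_generations(p) for p in record.parents), default=0)


def untagged_sources(record: DatasetRecord) -> List[str]:
    """List every dataset in the lineage that was never tagged real or synthetic."""
    names = [record.name] if record.synthetic is None else []
    for parent in record.parents:
        names.extend(untagged_sources(parent))
    return names


real = DatasetRecord("claims_2023", "data-office", synthetic=False)
vendor = DatasetRecord("vendor_feed", "procurement", synthetic=None)
gen1 = DatasetRecord("claims_synth_v1", "ml-team", synthetic=True, parents=[real, vendor])
gen2 = DatasetRecord("claims_synth_v2", "ml-team", synthetic=True, parents=[gen1])

print(synthetic_generations(gen2))
print(untagged_sources(gen2))
```

In this made-up lineage, the second synthetic dataset sits two generations away from real data and inherits one source that nobody ever tagged, which is exactly the kind of answer most organisations cannot give today.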

7. There is no responsible AI without you

My 2025 Prediction: In September 2024, two Harvard students used AI (LLMs) and smart glasses to extract all your personal details automatically, just as they passed by you, including your name, occupation, home address and other personally identifiable information about you. What data you put out is not yours anymore. While AI makes it scarily easy, the real issue is not the technical feasibility, but the ethics and responsibility around it.

My 2026 Update: Who's keeping all this AI stuff safe, ethical and responsible?

You’ve already read it by now. Meta Ray-Ban glasses are sending your entire footage to human contractors at a data annotation firm called Sama, in Nairobi, Kenya. Those contractors regularly watch everything from users going to the toilet, getting undressed and revealing bank card details captured by “mistake”, to private conversations and intimate moments. That includes footage you never consented to share, let alone have labeled for training AI models.

While Meta markets the Ray-Ban glasses as “built with your privacy in mind”, you know better than anyone else. Your data was never yours, and those “Terms of Service” tell a completely different story. Meta has turned 7 million users' private and professional lives into AI training data.

On the other hand, as AI is getting deeply woven into both your personal and professional lives, I see organisations, their developers, their employees, their engineers and even their CEOs taking a complete YOLO (you only live once) approach to AI ethics and security.

Massive examples of people “vibe-coding” their entire start-ups, their organisations, their enterprise apps, and then wondering how they got exploited.

Where is the damn accountability?

For decades, organisations have treated security and privacy as opposites.

Meta saying "built with privacy in mind" means nothing if it's not enforced. Your AI governance, AI ethics and responsible AI mean nothing if they're not enforced.

In all my CISO roles, I have led security and privacy as complementary functions to build a stronger, more resilient and privacy-friendly organisation and business, and not treat them as opposing forces.

Agentic AI security controls and security first principles are among the key ways to enforce privacy and data protection, especially when even legal agreements fall apart, despite everyone swearing to uphold them. If your AI governance, ethics and security aren't enforced through controls (not just some paperwork), they don't exist. Period.

Ask yourself:

- Am I "prompting" guardrails or actually integrating them?

- Is this AI/AR going to capture my data and exactly what data?

- Is my data going to be used for training AI models and where?

Security, ethics, safety, transparency, explainability, auditability and privacy are all key to Responsible AI. They are complementary and they need to come together to build responsible and trustworthy AI.

The Conclusion

The world is freaking out. Everyone is asking: “When will AI take my job?” Here’s my take on it.

Before AI will take your job, your fear of “AI taking your job” will take your job.

Read that again.

The “AI replacing you” scenario may happen in months or years. But that fear is here. It is in the now.

The above seven shifts are not just happening, they have already happened and they've already changed your job, your career and your organisation. This is not to scare you. This is to show you how you can leverage these shifts to make your role even more impactful despite the changing wind.

My Hot Take: With each and every one of those 7 shifts, I see massive opportunities in specific roles to upgrade your personal and professional life in cybersecurity, data protection, privacy, audit, etc.

The key skill engineers, coders and cyber professionals will bring isn't stitching together auto-completed code. It's systems thinking, critical reasoning and an analytical mindset for delivering value through AI.

Ask yourself this one question:

How would your career and business change positively, if you could use and apply one of these shifts to your career and organisation, and which one would that be?

I'm working on many of them, if not all.

P.S. You can re-listen to 2025 (last year’s) predictions here, to compare how drastically things have evolved in AI, in the same categories:

Until next time, this is Monica, signing off!

— Monica Verma

P.S. Please follow me/subscribe on YouTube, LinkedIn, Spotify and Apple. It truly helps. Or book a 1-1 advisory call, if I can help you.

***