On March 16, 2026, Jensen Huang walked onto the stage in San Jose to give his keynote, saying that every company needs an “OpenClaw strategy”. One week earlier, CodeWall released a report on how they hacked McKinsey’s internal AI platform in less than two hours, just by pointing their autonomous AI at the target domain without any human in the loop. A few days before that, Atlassian announced 1,600 layoffs and told the market that AI had changed the skills it needed. And right this very second, somewhere in your enterprise, an AI agent is making a decision that your board never discussed and your legal team never reviewed, with an underlying risk that your business never understood or managed.

These may sound like four separate stories. They are one story, told from four different angles. It’s a story about the troubling reality of enterprise AI security and privacy.

There are 2 types of organisations today.

First, those that are running insecure agentic AI in their enterprise infrastructure and leaking sensitive data; second, those that just don’t know about it.

Agentic AI is already integrated within your enterprise and making million dollar decisions, whether or not you have a strategy for security, privacy, or governance or the controls to implement it.

But here’s a big shift that most companies don’t yet understand.

We need to be building for machines, not just for humans. There is a hopeful future where machines do all the grind work and we as humans provide context, experience, boundaries, purpose and ideas to be executed. This means that, in a world where we build for AI agents, security, privacy and governance need to be built into what we build for machines, not bolted on as something that just breaks UX.

Today’s edition of The Predictability Factor by Monica Talks Cyber, covers:

Quick Updates

Update 1: 🫣 I have been traveling across Europe for keynotes/advisory. This newsletter was mostly written in Finland during my travels. I gave the main keynote there at a Nordic CIO and Cybersecurity Executive Conference, on the topic of Agentic AI Making Million Dollar Decisions. I am doing a full write-up on it as you are reading this. I’ll share that with you soon, along with the keynote video, which will be published on my youtube.com/monicatalkscyber channel.

Update 2: One of the many reasons I started The Predictability Factor is because…

While we need AI to defend against the darker side of AI, we need some level of predictability fused with structured data that’s governed by deterministic controls. Also, while we don’t want humans clicking to approve every AI action (the human-in-the-loop bit), we will continue to need human oversight. They are not the same. I explain below in the newsletter.

On a very high-level, this is how I am currently thinking about this architecture for agentic AI security and verifiability, and how it might be implemented (it’s a rough draft, a high-level architecture overview, and likely missing a lot of elements, as I’ll share below in the newsletter):

Hybrid Agentic AI Security Architecture (Loop):

Verify → Interpret → Structure → Enforce → Audit → Verify (See diagram below)

Verify (Pre-Runtime): Integrity checks (based on hashes, signatures, etc.) across the supply chain, model version pinning, MCP server vetting and signing, dependency and configuration scanning, and context integrity checks, including document sources verified against trusted baselines before the session begins. Particularly important at this stage is agent identity verification (re. all non-human identity (NHI) issues), along with ephemeral credentials for NHIs.

Interpret (Runtime): The agent uses its LLM capabilities to understand unstructured inputs (emails, chat, documents), adding some form of input sanitisation (which is hard).

Structure (Pre-Runtime and Runtime): The agent converts this understanding into structured data (JSON, code, structured database signals). Tools are coded with deterministic outputs that the LLM can then choose from to carry out actions. The action space is bounded by design, and agents run within isolated execution sandboxes.

Enforce (Runtime): A deterministic "guardrail" layer checks this output (from the LLM) against policies. If compliant, the action is taken; if not, it is blocked or sent for human review, along with logged reasoning traces. Additionally, irreversible decisions require mandatory human oversight and a rule-based cross-check.

Audit (Continuous): Immutable log traces capture every reasoning step and every action, the structured output that produced it, the policy rule that evaluated it, and the human identity accountable for it. Additionally, the feedback loop feeds into a digital twin for pre- and post-testing agentic actions in an isolated clone of a production environment.

This is a high-level and oversimplified version, and I would love to hear from you, where it would work and where it wouldn’t? It still wouldn’t address all prompt injection attacks. What other key stages are missing? I’ll continue refining it. If you have thoughts, reply here.
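To make the Structure, Enforce and Audit stages a bit more concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the action names, the `ALLOWED_ACTIONS` and `IRREVERSIBLE` sets, the `approved_by` field and the `AuditLog` class are hypothetical, and a hash chain only makes tampering detectable; it does not by itself make a log immutable.

```python
import hashlib
import json
from dataclasses import dataclass, field

# Hypothetical bounded action space: the agent may only propose these actions.
ALLOWED_ACTIONS = {"read_report", "send_summary", "delete_records"}
# Hypothetical irreversible actions that always need a named human approver.
IRREVERSIBLE = {"delete_records"}


def enforce(proposal: dict) -> str:
    """Deterministic policy check on the structured output of the LLM."""
    action = proposal.get("action")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        return "block"
    if action in IRREVERSIBLE and not proposal.get("approved_by"):
        return "human_review"
    return "allow"


@dataclass
class AuditLog:
    """Append-only log; each entry commits to the hash of the previous one."""
    entries: list = field(default_factory=list)

    def append(self, proposal: dict, decision: str, owner: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        record = {"proposal": proposal, "decision": decision,
                  "owner": owner, "prev": prev_hash}
        payload = json.dumps(record, sort_keys=True).encode()
        record["hash"] = hashlib.sha256(payload).hexdigest()
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        """Recompute the chain; any edited entry breaks it."""
        prev_hash = "0" * 64
        for record in self.entries:
            body = {k: v for k, v in record.items() if k != "hash"}
            payload = json.dumps(body, sort_keys=True).encode()
            digest = hashlib.sha256(payload).hexdigest()
            if record["prev"] != prev_hash or digest != record["hash"]:
                return False
            prev_hash = record["hash"]
        return True


def handle(raw_llm_output: str, log: AuditLog, owner: str) -> str:
    """Structure -> Enforce -> Audit for one proposed action."""
    try:
        proposal = json.loads(raw_llm_output)
        if not isinstance(proposal, dict):
            raise ValueError("expected a JSON object")
    except ValueError:
        # Output that cannot be structured is blocked, and still logged.
        proposal, decision = {"raw": raw_llm_output}, "block"
    else:
        decision = enforce(proposal)
    log.append(proposal, decision, owner)
    return decision
```

The design choice worth noticing is that the LLM never decides whether its own output is allowed: `enforce` is plain, testable code, and every decision, including blocked ones, ends up in the log with an accountable owner attached.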

Building Security and Privacy in Agentic AI

OpenClaw, built by one Austrian developer, Peter Steinberger, and valued at billions of dollars, is one of the fastest-growing repositories in GitHub history. Even more interesting than what he built is what happened after it went viral. By mid-February, OpenAI had hired Steinberger (allegedly with an offer in the billions of dollars) and taken the project in-house as an open-source platform. On March 16, Jensen Huang stood on a stage in San Jose calling it the operating system for personal AI and comparing its arrival to Linux, Kubernetes, and HTML.

That comparison was not just marketing. It was a category declaration. This is a fundamental shift not just for personal AI agents but for agentic AI in the enterprises.

OpenClaw opened the next frontier of AI to everyone and became the fastest-growing open source project in history. Mac and Windows are the operating systems for the personal computer. OpenClaw is the operating system for personal AI.

But, as you know, OpenClaw has had immediate security implications for every enterprise in the room.

OpenClaw is an autonomous agent with real-system access that takes actions, not just answers. It organises files. It writes and executes code. It browses the web. It completes multi-step tasks without routing data through the cloud, running instead on local hardware, with access to your systems, your files, and your workflows.

While it’s autonomous, it’s also a living and active security and privacy nightmare.

No external guardrails. No deterministic controls by default. Most people have installed OpenClaw on their laptops, connected it to their enterprise infrastructure, and given it real-system access not just to personal stuff but to their enterprise network, because there’s no security configuration at the network level blocking it, sandboxing it or securing it in any form, and their laptop happens to be connected to the enterprise network.

Just an agent with access to both the operating system on your machine and your company infrastructure.

That power is also the problem.

OpenClaw's original architecture has well-documented vulnerabilities: prompt injection (true for all LLMs), unconstrained file access, root permissions, and the ability to compromise a device remotely (as most instances have been connected to a reverse proxy exposing localhost to the Internet). Most of these flaws were found and reported within days of launch. Yet, 50,000+ instances are still exposed.

The same openness that makes it viral makes it dangerous at an enterprise scale. We don't just need AI that acts, we need one that can do things reliably, safely, securely, ethically and verifiably.

NVIDIA Building Security and Privacy in Agentic AI

Nvidia's response arrived at GTC on March 16. NemoClaw is a stack that installs onto OpenClaw in a single command. But the bigger news isn’t NemoClaw.

It’s OpenShell.

OpenShell is an open-source runtime that sits between your AI agent and your infrastructure. It does not modify how the model thinks or what it generates. It governs what the agent can do, what it can see, and where its data can go, enforcing those constraints at the infrastructure layer rather than the application layer.
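To illustrate what "enforcing at the infrastructure layer" means in principle, here is a minimal sketch in Python. It is not OpenShell's API or configuration format; `WORKSPACE`, `ALLOWED_HOSTS`, `may_read` and `may_connect` are hypothetical names, and a real runtime would enforce these rules at the operating system and network level (sandboxing, egress filtering) rather than in Python functions. The point is only that the checks live outside the agent and do not depend on what the model says.

```python
from pathlib import Path
from urllib.parse import urlparse

# Hypothetical policy, defined outside the agent and not editable by it.
WORKSPACE = Path("/srv/agent-workspace")
ALLOWED_HOSTS = {"api.internal.example.com"}


def may_read(path: str) -> bool:
    """Allow file access only inside the workspace, after resolving '..' and symlinks."""
    resolved = (WORKSPACE / path).resolve()
    return resolved.is_relative_to(WORKSPACE.resolve())


def may_connect(url: str) -> bool:
    """Allow outbound requests only to HTTPS hosts on the allowlist."""
    parsed = urlparse(url)
    return parsed.scheme == "https" and parsed.hostname in ALLOWED_HOSTS
```

With this policy, `may_read("notes/todo.txt")` is allowed while `may_read("../../etc/passwd")` is not, and `may_connect` rejects any host that is not explicitly listed, no matter how the agent was prompted.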

I have said it before: prompting does not equal security or guardrails. We need deterministic controls both at the infrastructure layer and across the entire agentic AI workflow chain.

What do I mean by that?

More security breaches will happen because of infiltration and compromise across the supply chain of an AI agent workflow.

Think about it like this: you have an AI agent, and now it's being upgraded. During the upgrade it installs multiple packages and pulls in different resources. When any part of that is compromised, you bring the compromised package and all its vulnerabilities into an agent and an environment that you trust. While you trust it, it’s already compromised. It’s like the SolarWinds attack, but for AI agents and their supply chain.

In your mind, that trust equation doesn't change, while your supply chain has already been compromised.
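One basic control against this kind of silent compromise is to pin what you reviewed and refuse anything that does not match. Here is a minimal sketch in Python, assuming a hypothetical `PINNED` table of SHA-256 digests recorded at review time; the artifact name and contents are made up for the demo, and in practice the digests would come from a signed lockfile rather than being computed in the same script.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of the given bytes."""
    return hashlib.sha256(data).hexdigest()


# Hypothetical pinned digests, recorded when each artifact was reviewed and approved.
# Computed inline here only so the example is self-contained.
PINNED = {
    "agent-plugin-1.4.2.tar.gz": sha256_hex(b"reviewed release contents"),
}


def verify_artifact(name: str, data: bytes) -> bool:
    """Accept an artifact only if it is pinned and its digest matches the pin."""
    expected = PINNED.get(name)
    if expected is None:
        return False  # unknown artifacts are rejected, not trusted by default
    return hmac.compare_digest(expected, sha256_hex(data))
```

An upgrade that pulls in a tampered copy of a known package, or a package nobody reviewed, fails the check before it ever reaches the agent's environment. It does not help if the pinned version itself was malicious when it was reviewed, which is why this is one control among several.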

That distinction matters. The previous approach to agent security was essentially asking the agent to police itself, trusting the model's own code to decide what it could and could not access. That's not security. That's a wish list. Full story below.

ICYMI:

AI Security and Privacy Nightmare Crept In. NVIDIA Stepped Up.

🤯 OpenClaw, NemoClaw, OpenShell...? NVIDIA just released its answers to one of the key problems we have with security and privacy around agentic and autonomous AI, especially for enterprises.

Read full story —>

The Reality of The AI Market

AI didn’t kill your job. Before AI kills your job, your fear of AI killing your job will kill your job.

Companies have been replacing entire teams with AI. For others, the roles are shifting quite a lot and very fast.

I’ll be brutally honest with you. The world is freaking out. At least that's what I see. Everyone is asking: ‘When will AI take my job?’ Here’s my take on it.

Before AI will take your job, your fear of ‘AI taking your job’ will take your job.

Read that again.

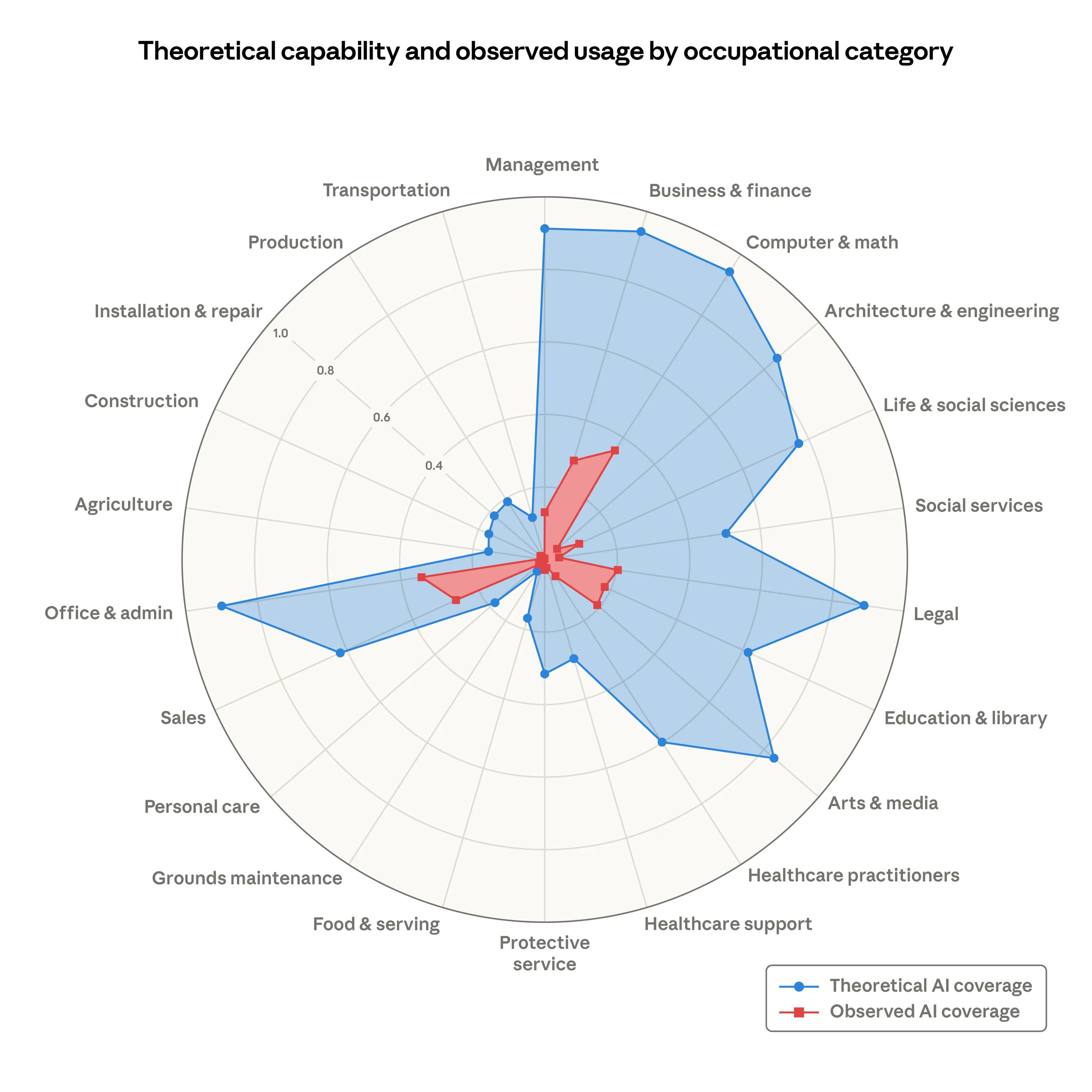

The latest research from Anthropic made that very clear. Anthropic recently released a research paper on which roles AI will replace, to what extent, and where the margin is today.

There are two ways to look at this analysis:

While we grew up believing that jobs in industries like computer science, tech, legal, et cetera will be the safest and surest jobs, AI has entirely changed that. These very jobs that seemed the safest will be the first ones to be displaced in different ways.

However, the other way to look at it, which is the good news, is that we are not there yet. According to the research, the observed coverage is still much lower than the theoretical capability and coverage of AI. That means you have leverage. You may not have much time, but you definitely have leverage right now, provided you know how to use AI in both your personal and professional lives in a way that's reliable, trustworthy and secure.

Here’s a hard reality. Three different data points.

Peter Steinberger, one developer in Austria, built OpenClaw in roughly an hour. OpenAI and Meta both bid billions to acquire it. He accepted OpenAI's offer.

That same month, Dario Amodei stood in Davos and said AI could replace software engineers in six to twelve months.

Recently, Matt Shumer published an essay that has since been viewed 85 million times warning that AI will replace your role in any and every industry.

One uncomfortable question. Where do humans fit in all of this?

The real shift that is happening is not AI replacing humans. It eventually will, for things like creating. The real shift is AI moving from saying to doing.

OpenClaw does not answer questions. It takes actions. It books meetings, queries databases, executes code. That transition from saying to doing changes the threat surface, the governance requirements, and the board accountability question in ways most organisations have not begun to map.

I recently asked a room full of more than 700 C-level executives (CISOs, CIOs and CROs) who had mapped their business decision points, and whether they knew which business decision workflows were being augmented or replaced by agentic AI. One hand went up. One. In the entire room.

In this era of AI, I see 7 key AI shifts happening right now that can gear you for what’s to come and how to leverage AI for your career and business.

7 Major AI Shifts That Have Already Changed Your Job

TLDR:

Click on the topics above or read the full blog here.

People confuse and misunderstand human oversight terribly. There is a lot of noise in our industry about having Human-in-the-Loop vs. not. That’s the wrong question.

There is a massive difference between human in the loop for actions and human in the loop for critical decision-making and accountability. While you may want seamless orchestrated workflows that do not require a human to approve every step, every action still needs to be verifiable, and a human needs to be held accountable for every consequence of those actions, even if they are carried out by an AI agent.

Once you understand that distinction, you start integrating human oversight very differently.

And then there is the question everyone in the C-suite needs to be asking: Who in your organisation is accountable for what the training data was built from, how many generations back, and whether the source data was tagged as synthetic before it entered the pipeline?

I am still waiting for an answer. I often say this in my keynotes.

Where does the buck stop? With the robots? With the AI agents? Then it stops nowhere.

ICYMI:

A Billion-Dollar Offer and Seven AI Shifts That Changed Your Job

A one-person team with billion dollar valuation is already here. That's the power of AI, whether you like it or not. How can you leverage that for your career and business? Read full story —>

Autonomous AI Hacked McKinsey’s AI

Companies will be hacked through personal autonomous agents. I gave this keynote last year, in which I talked about how very soon someone’s personal AI agent will autonomously attack someone else’s autonomous AI agent, without any of them knowing about it.

These autonomous AI agents may be personal, but in many cases they’ll be connected to enterprise infrastructure, so the repercussions of an autonomous-AI-to-autonomous-AI attack aren’t limited to personal infrastructure but can and will extend to enterprises.

It’s not just AI vs. AI, it’s autonomous AI vs. autonomous AI, blurring the boundaries between personal and enterprise infrastructures.

Here’s an even more uncomfortable truth about the world of AI. Despite autonomous AI vs. autonomous AI, the boring security fixes will still dominate building resilience for a while. CodeWall’s AI hack just proved it.

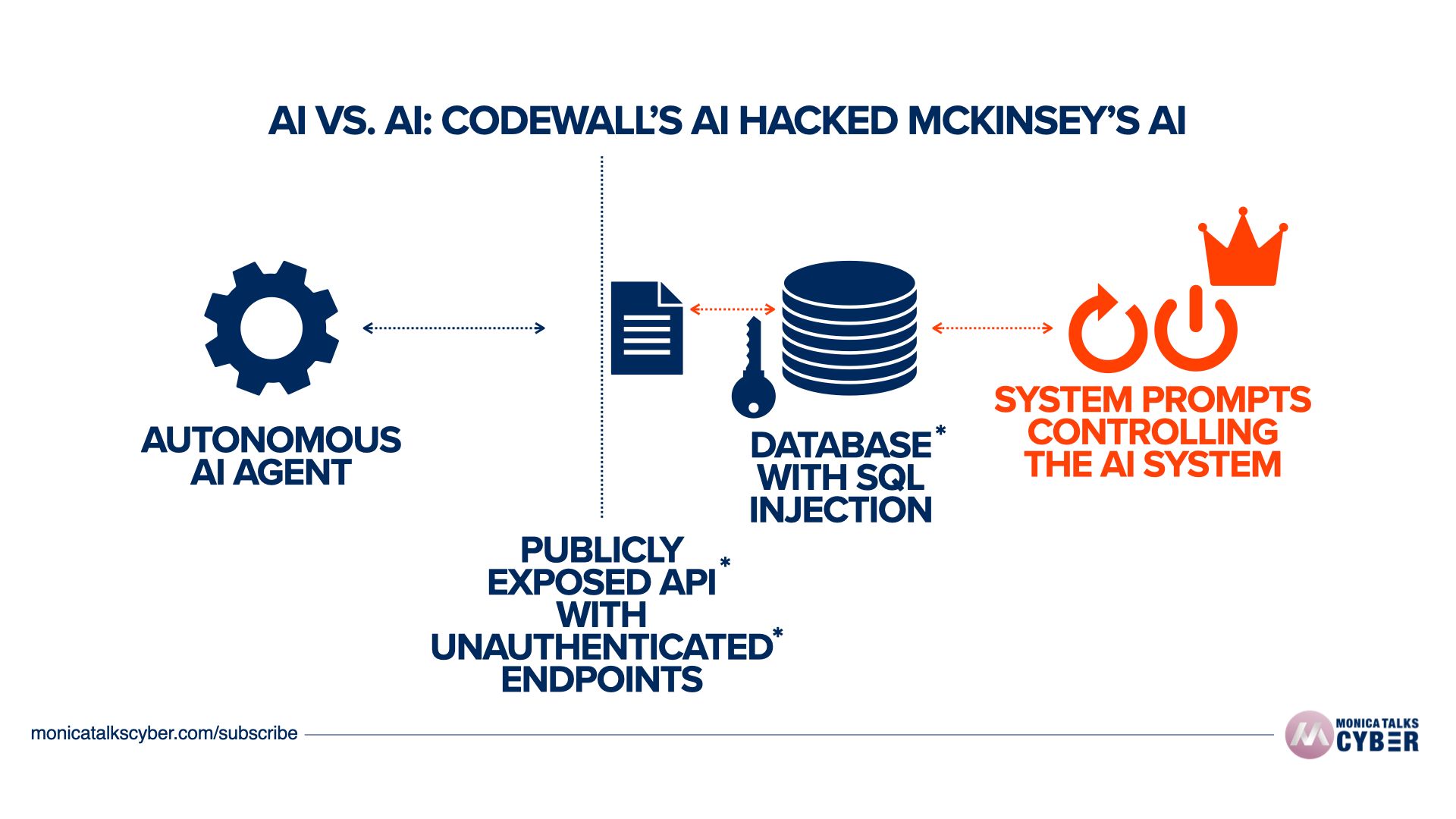

You’ve likely already heard about how McKinsey’s own AI system got hacked recently. This was an AI agent that hacked McKinsey’s AI agent.

I've been saying this for a while. While we will be seeing

Attacks using AI

Attacks against AI systems and

AI going rogue

More and more of that will shift towards AI attacking AI and, more importantly, autonomous AI attacking autonomous AI, such as one autonomous personal AI agent attacking another.

That fourth category is an underlying one.

But with McKinsey’s AI hack, this is not even the deeper story. It is not just that AI agents are now hacking AI systems.

If you check exactly what happened in this attack against McKinsey’s AI system, all the vulnerabilities that were found and exploited are traditional security mistakes. Not a zero day. Not a fancy attack at the model-level.

It was basically exposed APIs, unauthenticated endpoints and a lack of data validation against SQL injection. Various endpoints did not have any authentication or protection against unauthorised access. Here’s an oversimplified drawing for illustration. But it sends a clear message: even with AI, basic cybersecurity hygiene is no. 1.

So yes, the AI agent basically made the job faster, automated and somewhat autonomous. But it still did what a human hacker would have done against traditional cybersecurity issues and failures.

And both of these are true at the same time:

On one hand, we will see more and more autonomous AI hacking autonomous AI. There is no denying that.

On the other, even so, the majority of these attacks will succeed because of traditional cybersecurity failures or a lack of basic cybersecurity hygiene. Not just because the model hallucinated, not because AI went rogue and not because a zero day was found in the LLM. Surely those cases will also happen, but they will not be the majority.

What's even more interesting, though, is that when such a cyberattack happens on an AI system or a group of AI agents, the real question is: what is the repercussion or the result of that attack?

In this particular case, it didn't only lead to unauthenticated access but also leaked the internal system prompts that were controlling McKinsey's AI system.

Getting access to the AI system prompts is more critical than anything else, because this gives you insight into how that AI system works and how it can be controlled even further. This is like handing over the reins and the keys to the most crucial parts and vaults of the kingdom. This is your new crown jewel.

If you can access the system prompts, you can potentially alter the entire AI behaviour and the actions it takes, and remove any guardrails that have been prompted in, all without access to the code. That's also why I have been saying that prompting is not security.

So while you do need to worry about prompt injections, adversarial machine learning, AI going rogue and hallucinations, you first need to fix traditional security vulnerabilities and issues like broken authentication, lack of authorisation, insecure API configuration, SQL injection vulnerabilities, weak access management and an overall lack of the basic cybersecurity hygiene that has been validated and tested over and over.
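Two of those boring fixes, authenticating every endpoint and never concatenating user input into SQL, fit in a few lines. Here is a minimal sketch in Python using the standard library's `sqlite3` and `hmac` modules. It is not a reconstruction of the McKinsey platform or of the attack; the `documents` table, the `API_TOKEN` value and the function names are hypothetical and exist only for illustration.

```python
import hmac
import sqlite3

# Hypothetical API token; in practice this would come from a secrets manager.
API_TOKEN = "demo-token-change-me"


def is_authenticated(presented_token: str) -> bool:
    """Compare tokens in constant time to avoid leaking information via timing."""
    return hmac.compare_digest(presented_token.encode(), API_TOKEN.encode())


def find_documents(conn: sqlite3.Connection, token: str, owner: str) -> list:
    """Return document titles for an owner; reject unauthenticated callers."""
    if not is_authenticated(token):
        raise PermissionError("missing or invalid token")
    # The '?' placeholder keeps user input as data; it is never parsed as SQL.
    rows = conn.execute(
        "SELECT title FROM documents WHERE owner = ?", (owner,)
    ).fetchall()
    return [title for (title,) in rows]


def demo_connection() -> sqlite3.Connection:
    """In-memory database with two sample rows, for the demo only."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE documents (title TEXT, owner TEXT)")
    conn.executemany(
        "INSERT INTO documents VALUES (?, ?)",
        [("Q1 plan", "alice"), ("Board memo", "bob")],
    )
    return conn
```

With the parameterised query, a classic injection string such as `alice' OR '1'='1` is treated as a (non-existent) owner name and returns nothing, and a call without a valid token never reaches the database at all.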

AI-Powered? More like Fraud-as-a-Service

According to an anonymous investigative post on Substack, Delve has seemingly been faking compliance and audit work for its customers, defrauding both its customers and their customers. Delve pulled out of the RSAC 2026 conference at the last moment.

Delve’s (some of them now former) clients pooled resources to investigate what was happening after a series of fishy incidents. As per their investigation, there is damning evidence that Delve has been “issuing” fraudulent, worthless and likely invalid compliance attestations for their clients.

[Delve] achieves its claim of being the fastest platform by producing fake evidence, generating auditor conclusions on behalf of certification mills that rubber stamp reports, and skipping major framework requirements while telling clients they have achieved 100% compliance.

If I were ever to read “100% compliance”, I’d be shocked and extremely curious. In all my years as a CSO, not once did we have 0 recommendations. Being compliant is one thing. Having 0 issues or 0 improvements is simply impossible.

Delve helps 1500+ companies comply with SOC 2, HIPAA, ISO 27001, GDPR, PCI DSS, and dozens more in 4x less time.

There are three major problems when something like this happens:

It's not just the responsibility of the vendor who is helping you get compliant. You, as a company and as an organisation, carry the full liability and accountability for ensuring that your compliance is in good standing. That liability cannot be pushed to the vendor, especially if you're using this to close deals with your clients, which is exactly what some of Delve’s prominent clients have done.

The whole idea behind fast compliance has been broken for a while. If you are truly trying to get compliant, there is no shortcut. This is one of the things I teach in my security leadership masterclass, where I literally show my students the entire “annual security wheel”, incorporating all the different activities that a CISO does throughout the year, including preparing for an audit. That work is done throughout the year. There is an entire annual cycle, with work in every quarter and every month, for the one or two times a year when the actual external audit happens. That is continuous compliance. It doesn't happen just once a year.

This is exactly why segregation of duties has always mattered in every role. There is a reason why I rejected every single offer for a CISO role that reported to either the CTO or the CIO. Firstly, I must admit I had the privilege to say no. But given that I had the privilege, and despite a lucrative package, one of the reasons I rejected those offers over and over again is that this “seemingly unimportant” reporting line is not only a breach of segregation of duties on paper, it is a big issue in day-to-day practice. I see so many large organisations, even Fortune 500 companies, where the CISO or the CSO reports to the CTO. The problem is that you think it will not happen to you, until it actually happens to you. I am very strong on segregation of duties across roles, because integrity and ethics are absolutely crucial in our industry. Conflicting reporting lines, where you cannot govern the implementer to do their job correctly or where there is no independent oversight, are a burden every single day, one that not only derails you from your responsibility but also prevents you from doing it ethically and with integrity at all times. Read that again.

I will keep beating the drum for as long as it takes to ensure clear segregation of duties across cybersecurity, risk management, compliance and audit. This is the reason why, in every organisation where I have served as a CSO, I've organised and operated my teams according to the three lines of defence model. This is the reason why I rejected every single offer where my reporting line was in direct conflict with my responsibilities and I risked not being able to serve with full integrity and ethics at all times.

🤩 Claude Code Just Got Auto Mode

A developer friend recently asked me if he could set up Claude Code in his production environment in such a way that he didn’t have to approve every action, every command, every 2 min. I told him, well, technically yes, but I wouldn’t recommend it, especially if you are relying only on Claude’s own sandbox in a prod environment. I was just talking about it again in my keynote last week on Agentic AI Making Million Dollar Decisions (video coming out soon on youtube.com/monicatalkscyber).

That just changed about an hour ago, when Anthropic published a blog post that most people in enterprise security will scroll past without registering what it actually means.

Claude Code now has auto mode.

Here is what that means. Until today, Claude Code's default behaviour was conservative by design but still flawed in a big way. This is not specific to Claude but to any agentic AI we have. Every file write, every bash command, every action required human approval before it ran. Rightly so. That was the safety default. But when security becomes annoying, people bypass it.

Some developers found it too slow for long-running tasks and bypassed it entirely using a flag called --dangerously-skip-permissions. The name should tell you everything you need to know about what that flag does.

Auto mode is Anthropic's answer to that gap.

A classifier reviews each tool call before it runs and checks for potentially destructive actions: mass file deletion, sensitive data exfiltration, malicious code execution. Actions the classifier considers safe proceed automatically. Risky ones get blocked. If Claude is repeatedly blocked and cannot complete the task, it eventually escalates to a human.

On the surface, that sounds like a reasonable middle path. And for developers who were using --dangerously-skip-permissions in isolated environments anyway, it is.

However, this is still just risk reduction, not risk elimination.

The question is what happens when auto mode is deployed in environments that are not isolated.

Claude Code’s auto mode classifier is not a security guarantee. It is a risk reduction.

Anthropic is explicit about this. Auto mode reduces risk compared to skipping all permissions. It does not eliminate it.

The classifier may still allow some risky actions: for example, if user intent is ambiguous, or if Claude doesn't have enough context about your environment to know an action might create additional risk. It may also occasionally block benign actions.

What I want your security team to understand is this.

A classifier that makes automated decisions about whether an agent's action is safe or risky is operating on the same fundamental assumption as every other layer of runtime control we have discussed so far in today’s newsletter edition:

The threat needs to be known at the point of execution.

However, indirect prompt injection does not work that way. Memory poisoning does not work that way. A poisoned skill that passed verification does not work that way. These threats arrive before the classifier ever sees the action. So auto mode reduces only a certain type of risk.

Auto mode is a meaningful improvement on no mode. It is not a substitute for governance and the rest of the controls I mentioned above.

Auto mode with Claude Code already has access to your file system, your code, your workflows. A classifier that clears an action as safe is not the same as a policy that says that action was authorised.

The distinction between "the AI was allowed to run" and "the organisation authorised what the AI did" is one I have been making throughout this newsletter. Auto mode makes that distinction more urgent, not less.
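Here is a minimal sketch in Python of that distinction between "allowed to run" and "authorised". It does not represent Anthropic's classifier or any Claude Code interface; `classifier_says_safe` is a toy stand-in, and the `AUTHORISED` table, the agent and tool names and the owner address are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Action:
    agent: str
    tool: str
    target: str


# Hypothetical authorisation policy: which agent may use which tool,
# and which human is accountable for the outcome.
AUTHORISED = {
    ("release-bot", "write_file"): "head.of.engineering@example.com",
}


def classifier_says_safe(action: Action) -> bool:
    """Toy stand-in for a runtime classifier; a real one is probabilistic."""
    return "rm -rf" not in action.target


def decide(action: Action) -> Tuple[str, Optional[str]]:
    """An action runs only if it looks safe AND the organisation authorised it."""
    if not classifier_says_safe(action):
        return ("blocked_by_classifier", None)
    owner = AUTHORISED.get((action.agent, action.tool))
    if owner is None:
        return ("not_authorised", None)
    return ("run", owner)
```

A harmless-looking `bash` call from `release-bot` passes the classifier but is still refused, because nobody in the organisation authorised that agent to use that tool, and every action that does run carries the name of the human accountable for it.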

For CISOs, CROs, engineering leaders or anyone reading this: Your immediate question should not be whether to enable or disable auto mode. Of course, enable it; it’s better than nothing. But if you become complacent, thinking you are now protected, that’s a bigger risk. Ask yourself whether you have mapped which workflows your agentic AI is operating on, which decision points agentic AI is replacing or augmenting, what data it can reach, what actions it can take, whether those are controlled, who in your organisation owns accountability for the outputs it produces, and whether those outputs are verified, independent of whether a classifier approved them or not.

Until next time, this is Monica, signing off!

— Monica Verma

P.S. Please follow me/subscribe on YouTube, LinkedIn, Spotify and Apple. It truly helps. Or book a 1-1 advisory call, if I can help you.

***